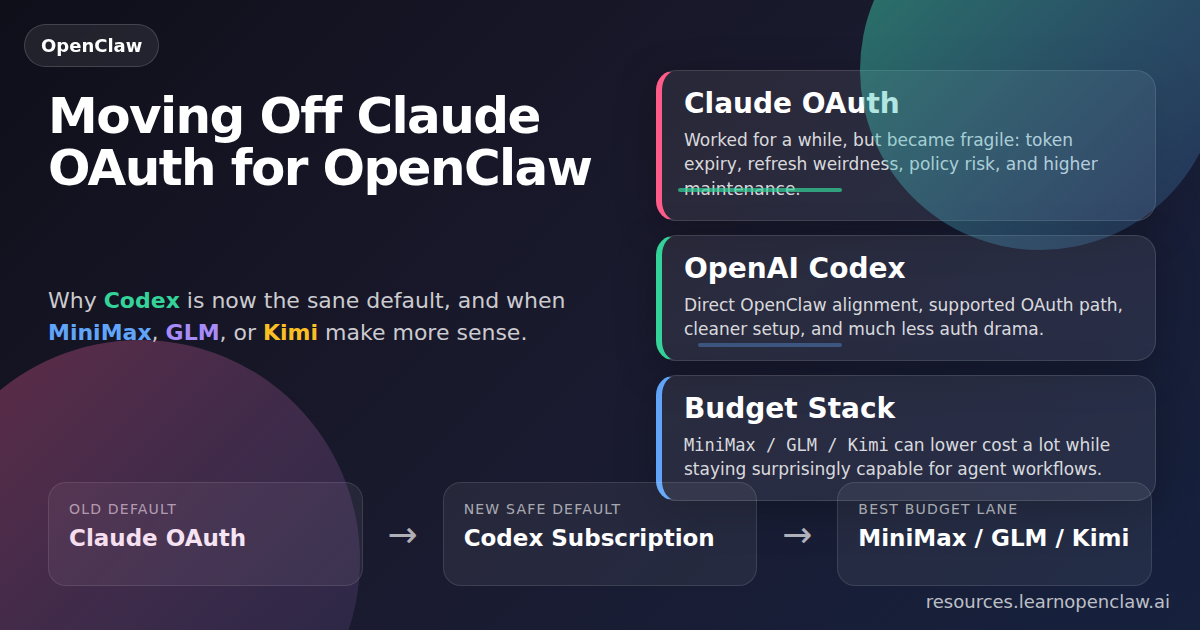

If you built your OpenClaw setup around Claude OAuth, the ground just moved under your feet.

For a while, Claude subscription auth was the obvious move: strong model quality, predictable monthly pricing, and less anxiety about uncapped API spend while agents were running. Then the cracks started showing. Tokens expired. Refresh behavior got weird. Policy signals got messy. And the bigger issue became painfully obvious: building your OpenClaw workflow around Claude OAuth is no longer stable enough to recommend as a primary setup.

That does not mean Claude is unusable with OpenClaw.

It means the old “Claude OAuth as the default subscription backbone for autonomous agents” approach is no longer the smart bet.

Short answer: If you are moving off Claude OAuth for OpenClaw, the best default recommendation is OpenAI Codex. If you want cheaper options, look hard at MiniMax, GLM, and Kimi. If you still want Claude, use the Anthropic API for serious production work and treat Claude Proxy with OAuth as an experimental path, not your foundation.

What this article covers

- What changed with Claude OAuth

- Why Claude Proxy still exists but is a pain

- Why OpenAI Codex is now the cleanest recommendation

- Which cheaper subscription-style alternatives are actually worth trying

- Which setup makes sense by budget, risk tolerance, and workflow type

The problem Claude OAuth used to solve

People did not gravitate toward Claude OAuth because they were trying to be sneaky. They did it because it solved a real operational problem: spending control.

When you run OpenClaw as more than a chatbot—when it starts doing research, coding, browser tasks, cron jobs, content workflows, or multi-agent loops—you stop caring only about benchmark quality. You start caring about operational reality:

- Can I trust this to run unattended?

- Can I predict the monthly cost?

- Will it still be authenticated tomorrow?

- Will I wake up to completed work or a broken session and a cryptic 401?

Claude subscription auth gave people a psychologically comfortable answer: a flat-rate spend ceiling. That mattered. A lot.

But feeling safe and being production-safe are not the same thing.

What actually changed?

A few things happened at once, which is why so many people got confused.

1. Claude OAuth became a worse operational dependency

OAuth-based auth chains are fine when a provider explicitly supports them for your use case and the refresh flow is stable. They are a terrible foundation when tokens expire aggressively, refresh behavior is inconsistent, multiple tools can step on each other’s auth state, and the provider is clearly rethinking third-party usage.
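To make the token-expiry risk concrete, here is a minimal sketch of a pre-flight check an unattended job could run before it starts. Everything in it is an assumption for illustration: the function name, the epoch-second inputs, and the safety margin are hypothetical and are not part of OpenClaw or any provider's tooling.

```shell
#!/bin/sh
# Hypothetical helper: should an OAuth token be refreshed before a long run?
# Arguments (all illustrative, in epoch seconds):
#   $1 = token expiry time, $2 = current time, $3 = safety margin
token_needs_refresh() {
    expires_at=$1
    current=$2
    margin=$3
    # Succeeds (exit 0) when the token expires within the safety margin.
    [ $((expires_at - current)) -le "$margin" ]
}

# Example: the token expires 300 seconds from "now" but the job wants a
# 600-second margin, so a refresh is due before the job starts.
if token_needs_refresh 1000300 1000000 600; then
    echo "refresh before starting the job"
else
    echo "token is fresh enough"
fi
```

A check like this only helps when the refresh step itself is reliable, which is exactly the part that has become hard to count on with Claude OAuth.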

OpenClaw’s OAuth documentation now draws a much clearer line around Anthropic. It distinguishes between:

- Anthropic API key for normal usage-based API billing

- Anthropic Claude CLI / subscription auth inside OpenClaw as a separate path

By contrast, OpenClaw’s docs are explicit that OpenAI Codex OAuth is supported for use in external tools like OpenClaw. That is a completely different confidence signal from “this still kind of works if you line up the moon phases correctly.”

2. The ecosystem started routing people away from Claude OAuth hacks

The current wave of articles, discussion threads, and OpenClaw community conversations all point in the same direction:

- Claude subscription access for third-party tools got less reliable

- People started looking for alternatives fast

- Codex, Kimi, MiniMax, GLM, and Qwen-based routes started getting serious attention

That does not settle the technical question by itself, but it tells you where the market is going: away from fragile Claude-OAuth-as-a-hack, and toward officially supported or operationally cleaner paths.

3. The old workaround became a maintenance burden

Even when Claude OAuth can still be made to work, the real question is:

Do you want your daily OpenClaw system to depend on a brittle workaround?

For most people, the answer should be no.

The decision in one table

| Option | Best for | What’s good | Main downside | My recommendation |
|---|---|---|---|---|
| OpenAI Codex | Most OpenClaw users who want a stable subscription path | Officially supported OAuth path, easy onboarding, strong coding/agent performance, direct OpenClaw alignment | Not always everyone’s favorite model stylistically | Best default choice |
| Claude Proxy + OAuth | Tinkerers who like living dangerously | Can still preserve Claude-ish workflows in some setups | Fragile, harder to configure, harder to recover, policy-sensitive | Not recommended as a primary setup |
| Anthropic API | People who explicitly want Claude in production | Cleanest way to keep using Claude, supported route | Usage-based billing, no subscription cap | Best Claude route for serious work |
| MiniMax | Budget-conscious users and high-volume workflows | Very good value, growing OpenClaw support, practical agent performance | Less prestige, may need more testing for your exact tasks | Best budget option to test first |
| GLM | Cost-sensitive users who want a credible alternative | Cheap, increasingly capable, supported through Z.AI in OpenClaw | Less mainstream, fewer people have strong intuition for it | Very strong value option |
| Kimi | Users comfortable with more explicit prompting | Good multi-step performance, strong value | Less forgiving than Claude, benefits from clearer instructions | Worth testing in a budget stack |

Yes, you can still use Claude Proxy with OAuth, but I would not build around it

Let’s be precise here. If you really want Claude-quality output inside OpenClaw without moving fully to Anthropic API billing, there are still proxy-style approaches people use. In practice, that usually means some combination of:

- reusing Claude CLI auth,

- routing through a Claude-compatible proxy,

- or stitching together setup-token / token-reuse flows that are more fragile than they look at first.

This is where a lot of people get trapped. They hear “still possible” and interpret it as “good default choice.” It is not.

Important: Claude Proxy with OAuth is still possible in some environments, but it is less stable, harder to configure, harder to explain to other people, and more annoying to recover when auth breaks. That makes it a hobbyist or advanced-user route—not the route I would recommend as the primary backbone of an OpenClaw system.

Why it is a bad default

- Harder to configure than mainstream provider setups

- Harder to teach to your team, audience, or future self

- Harder to recover when authentication breaks

- Less stable for long-running agents and cron jobs

- More vulnerable to provider policy changes

If you like plumbing auth flows for sport, fine. But if you want a reliable OpenClaw system you can use daily, document publicly, or automate with confidence, this is no longer the sane default.

The best default recommendation: OpenAI Codex

If you want the cleanest answer for most OpenClaw users in 2026, it is this:

Use OpenAI Codex.

Not because it is the only option. Because it has the best combination of:

- support,

- stability,

- ease of setup,

- subscription compatibility,

- and alignment with where OpenClaw is clearly strongest right now.

Why Codex wins: OpenClaw explicitly supports the Codex OAuth route, has a dedicated openai-codex/* provider path, and can reuse Codex CLI credentials cleanly when available. In plain English: it just works far more often than the Claude-OAuth workaround stack.

The partnership angle matters

OpenAI partnered directly with OpenClaw. That matters more than it sounds like it should. It means the integration path is aligned rather than adversarial. You are not trying to squeeze a consumer product into a workflow it was never meant to power. You are building on a path with actual product-level support behind it.

That usually translates into the things users actually care about:

- better maintenance,

- fewer weird breakages,

- clearer docs,

- and faster fixes when something goes wrong.

Why Codex beats Claude OAuth as a system choice

Claude may still win individual taste tests for some writing and reasoning tasks. Fine. But your primary OpenClaw model should be judged as a system dependency, not just a benchmark score.

And as a system dependency, Codex currently wins because it is:

- more officially supported in this workflow category,

- less fragile operationally,

- easier to onboard,

- easier to teach other people,

- and less likely to implode because of auth weirdness.

Subscription vs API: stop mixing these up

There are really two separate questions:

- Which model/provider should power OpenClaw?

- Which billing/auth model makes sense for how you use it?

If you specifically want a subscription-style setup because you value spend predictability, Codex is the cleanest replacement for old Claude OAuth workflows.

If you are okay with usage-based billing, the field gets wider. You can consider:

- Anthropic API

- OpenAI API

- MiniMax API

- Qwen Cloud

- GLM through Z.AI

- and other supported providers

But if your actual question is “what subscription should I use after moving off Claude OAuth?”, the answer is still Codex first.
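If you are unsure which billing model fits your usage, a rough break-even calculation helps. The sketch below uses made-up placeholder prices (a flat plan at 2000 cents per month and an average of 15 cents per agent task); neither number comes from any provider's price list, so substitute your own.

```shell
#!/bin/sh
# All prices are hypothetical placeholders in US cents, not real pricing.
flat_monthly_cents=2000      # assumed flat subscription price per month
usage_cents_per_task=15      # assumed average API cost per agent task

# Tasks per month at which usage billing reaches the flat price,
# rounded up to the next whole task.
break_even_tasks() {
    flat=$1
    per_task=$2
    echo $(( (flat + per_task - 1) / per_task ))
}

# Which billing model is cheaper for a given monthly task count?
cheaper_billing() {
    tasks=$1
    usage_total=$(( tasks * usage_cents_per_task ))
    if [ "$usage_total" -lt "$flat_monthly_cents" ]; then
        echo "usage-based"
    else
        echo "subscription"
    fi
}

break_even_tasks "$flat_monthly_cents" "$usage_cents_per_task"   # prints 134
cheaper_billing 50     # prints usage-based
cheaper_billing 400    # prints subscription
```

With these assumed numbers, light users come out ahead on usage-based billing, and anyone running agents all day hits the flat price quickly. That is why heavy OpenClaw users care so much about a subscription ceiling.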

Provider-by-provider breakdown

OpenAI Codex

Best for: Most OpenClaw users who want the cleanest supported subscription path.

- Explicitly supported OAuth usage in OpenClaw

- Dedicated `openai-codex/*` route

- Strong for coding, agents, and operational reliability

- Best choice for teaching and repeatable setups

My verdict: If you want one answer for “what should I use instead of Claude OAuth?”, this is it.

MiniMax

Best for: Budget-conscious users and high-volume workflows.

- Very strong value

- Good enough performance for lots of real agent work

- Documented in OpenClaw as a serious provider, not a side experiment

- Has OAuth and API-based routes depending on plan and region

My verdict: Probably the smartest low-cost option to test first.

GLM

Best for: Cost-sensitive users who still want a credible, capable model stack.

- Available in OpenClaw through the `zai` provider

- Bundled support for current GLM family models

- Increasingly viable for agent-style work

- Strong value if you are willing to test outside the default brand-name path

My verdict: One of the best value plays in the current OpenClaw ecosystem.

Kimi

Best for: People who want a lower-cost alternative and do not mind more explicit prompting.

- Shows up repeatedly in discussions as solid for multi-step tasks

- Good value for heavy usage

- Less forgiving than Claude, but often perfectly usable in agent flows

My verdict: Worth testing, especially as part of a budget stack with a stronger fallback model.

Anthropic API

Best for: People who explicitly want Claude in production.

- Cleanest supported way to keep using Claude

- Avoids the fragility of OAuth workarounds

- Better fit for serious unattended workflows than proxy hacks

Main tradeoff: usage-based billing instead of a flat subscription cap.

Qwen

Best for: Users evaluating broader provider options, especially via coding-plan or standard API routes.

- Still a serious supported provider in OpenClaw

- But OpenClaw docs explicitly note that Qwen OAuth has been removed

- Good reminder that building on fragile subscription-auth paths can age badly

My verdict: Relevant, but not the lead recommendation for this specific migration decision.

My recommended setups by budget

| Budget / goal | Primary | Fallback | Why this setup works |
|---|---|---|---|
| I want the easiest answer | OpenAI Codex | None required initially | Fastest path to a stable, teachable OpenClaw setup |
| I want low cost but still solid results | MiniMax | Codex | Run cheap most of the time, escalate hard tasks to Codex |
| I want the best value experiment stack | GLM or MiniMax | Kimi or Codex | Good way to lower spend without giving up a premium escape hatch |
| I still really want Claude | Anthropic API | Codex | Stable Claude route for serious work, with Codex as a strong secondary path |
| I enjoy tinkering and don’t mind breakage | Claude Proxy + OAuth | Codex | Only acceptable if you knowingly treat Claude Proxy as experimental infrastructure |
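If you keep notes or scripts for your own setup, the same table fits in a small lookup. This is only a sketch: the goal labels (`easiest`, `low-cost`, `value`, `claude`, `tinker`) are shorthand invented here, and the output strings are plain text copied from the table, not OpenClaw configuration values.

```shell
#!/bin/sh
# Hypothetical lookup: map a goal label to the primary/fallback pair
# from the table above. Labels and output format are illustrative only.
recommend_setup() {
    case "$1" in
        easiest)  echo "primary=OpenAI Codex fallback=none" ;;
        low-cost) echo "primary=MiniMax fallback=Codex" ;;
        value)    echo "primary=GLM or MiniMax fallback=Kimi or Codex" ;;
        claude)   echo "primary=Anthropic API fallback=Codex" ;;
        tinker)   echo "primary=Claude Proxy + OAuth fallback=Codex" ;;
        *)        echo "unknown goal: $1" >&2; return 1 ;;
    esac
}

recommend_setup low-cost   # prints primary=MiniMax fallback=Codex
```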

Quick setup examples inside OpenClaw

If you want this article to be practical instead of just opinionated, here are the relevant setup directions drawn from OpenClaw’s provider docs.
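Before the individual commands, here is a minimal dry-run sketch that maps a short provider label to the matching `--auth-choice` value listed in the rest of this section. The labels on the left are made up for this example. The helper only prints the command so you can review it before running anything.

```shell
#!/bin/sh
# Hypothetical dry-run helper. The short labels are invented for this
# sketch; the auth-choice values are the ones listed in this section.
auth_choice_for() {
    case "$1" in
        codex)          echo "openai-codex" ;;
        minimax-global) echo "minimax-global-oauth" ;;
        minimax-cn)     echo "minimax-cn-oauth" ;;
        zai-global)     echo "zai-coding-global" ;;
        zai-cn)         echo "zai-coding-cn" ;;
        zai-key)        echo "zai-api-key" ;;
        qwen-key)       echo "qwen-api-key" ;;
        qwen-standard)  echo "qwen-standard-api-key" ;;
        *)              return 1 ;;
    esac
}

# Print (do not execute) the onboarding command for a label.
print_onboard_cmd() {
    choice=$(auth_choice_for "$1") || {
        echo "unknown provider label: $1" >&2
        return 1
    }
    echo "openclaw onboard --auth-choice $choice"
}

print_onboard_cmd codex   # prints openclaw onboard --auth-choice openai-codex
```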

OpenAI Codex OAuth

$ openclaw onboard --auth-choice openai-codex

$ openclaw models auth login --provider openai-codex

MiniMax Coding Plan / OAuth

$ openclaw onboard --auth-choice minimax-global-oauth

$ openclaw onboard --auth-choice minimax-cn-oauth

GLM through Z.AI

$ openclaw onboard --auth-choice zai-coding-global

$ openclaw onboard --auth-choice zai-coding-cn

$ openclaw onboard --auth-choice zai-api-key

Qwen Cloud

$ openclaw onboard --auth-choice qwen-api-key

$ openclaw onboard --auth-choice qwen-standard-api-key

What the top-ranking articles get wrong

After looking at the current pages ranking for this topic, most of them have the same three weaknesses:

They are too short

Most of the current content is basically: “Claude changed policy, here are three alternatives, good luck.” That is not enough. People need help making an actual decision, not just being reminded that options exist.

They blur together “possible,” “supported,” and “recommended”

These are not the same thing:

- Possible = you might be able to make it work

- Supported = the provider and toolchain clearly allow and maintain it

- Recommended = it is the best tradeoff for most real users

Claude Proxy with OAuth is still possible in some setups. OpenAI Codex is much closer to supported and recommended. That distinction matters.

They ignore operations

The right OpenClaw model choice is not just about raw intelligence. It is about auth stability, setup friction, billing predictability, maintenance burden, and whether you can trust the setup in long-running sessions, cron jobs, and agent loops.

My actual recommendation by user type

If you want the simplest, safest default

Choose OpenAI Codex.

It is the easiest path to explain, teach, reproduce, and trust.

If you want the best budget/performance balance

Try MiniMax first, then GLM, then Kimi depending on availability and preference.

If I were building a cost-efficient OpenClaw stack today, I would seriously test:

- Primary: MiniMax or GLM

- Fallback / premium lane: Codex

That gives you a practical mix of low cost and strong escalation capability.
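One way to picture the escalation rule is a tiny routing function. The sketch below is purely illustrative: the lane names, the `hard` difficulty label, and the retry threshold are assumptions made up for this example, not OpenClaw settings or model IDs.

```shell
#!/bin/sh
# Hypothetical routing sketch: stay on the cheap lane unless the task is
# flagged hard or the cheap lane has already failed too many times.
PRIMARY_LANE="minimax"     # illustrative label; "glm" would work the same way
PREMIUM_LANE="codex"       # illustrative label for the fallback / premium lane
MAX_CHEAP_ATTEMPTS=2       # assumed retry budget before escalating

pick_lane() {
    difficulty=$1
    failed_attempts=$2
    if [ "$difficulty" = "hard" ] || [ "$failed_attempts" -ge "$MAX_CHEAP_ATTEMPTS" ]; then
        echo "$PREMIUM_LANE"
    else
        echo "$PRIMARY_LANE"
    fi
}

pick_lane routine 0   # prints minimax
pick_lane routine 2   # prints codex
pick_lane hard 0      # prints codex
```

The design choice is to escalate on evidence (a hard flag or repeated failures) rather than by default, so the premium lane only carries the work that actually needs it.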

If you absolutely want Claude-quality output

Use the Anthropic API for production.

It is cleaner, more honest, and more stable than clinging to OAuth hacks.

If you like tinkering and don’t mind breakage

Sure, keep a Claude Proxy setup around. But do it with your eyes open. That setup now belongs in the category of interesting for advanced users, not the default thing people should build their OpenClaw life on.

Final verdict

If you are moving off Claude OAuth for OpenClaw, don’t overcomplicate this.

My primary recommendation: use OpenAI Codex.

It is the cleanest replacement because:

- OpenAI explicitly supports this kind of external-tool OAuth usage,

- OpenClaw has a dedicated Codex route,

- OpenAI and OpenClaw are directly aligned,

- and the setup is dramatically less stupid than trying to keep a fragile Claude OAuth stack alive.

My budget recommendation: test MiniMax, GLM, and Kimi.

Do not dismiss them just because they are not the default brand-name answer. For many OpenClaw workloads, they are already good enough to be genuinely useful.

My Claude recommendation: if you still want Claude, stop romanticizing OAuth. Use the Anthropic API for serious production work.

The one-sentence version: If Claude OAuth used to be the clever default, OpenAI Codex is now the sane default.

Sources and research notes

- OpenClaw OAuth documentation, which describes OpenAI Codex OAuth as supported for external tools and explains the modern auth split for Anthropic

- OpenClaw provider docs for OpenAI, MiniMax, GLM, and Qwen

- Recent public articles and community discussion pages about Claude subscription changes, token expiry, and alternative model stacks for OpenClaw-style workflows

The main pattern across the top pages is obvious: most are short, most focus on Claude policy drama rather than durable setup advice, and most mention alternatives without clearly separating what is merely possible from what is actually recommended. That gap is exactly what this article is meant to fix.